A variety of technologies are emerging for tracking emotions via the Internet using techniques such as text analytics, speech analysis, and video analysis of the face.

Better tools for tracking emotions hold promise for bringing awareness to our inner state through outside feedback. This kind of technology also promises to make it easier to understand how websites, mobile applications, and ads impact the emotional state of users. “The end goal should be to reengineer business to be truly customer-centric, which was infeasible until emotional analytics entered the picture,” said Armen Berjikly, Founder and CEO of Kanjoya, a sentiment analysis service.

Ken Denman, President and CEO of Emotient, which makes emotional tracking technology for the face, said,

Fundamentally our motivation is to accelerate the pace of innovation for consumers, patients, and students, by providing actionable insights faster and more accurately than ever dreamed. This will empower product developers, customer, and patient experience owners to more quickly and accurately understand what is working and what is not.

At the same time, Website owners need to be cautious in how they measure, analyze, and store data about the emotional state of users. As previously reported, experimenting on the ways that applications, ads, and Websites impact users could help organizations make the world a better place. But organizations need to be thoughtful in their use of emotional information so as not to alienate users.

As Rana el Kaliouby, Co-founder and Chief Science Officer of Affectiva, which has developed the Affdex service for analyzing the facial expression of emotions, explained,

As a company, we understand how critical the data we are collecting is. Our philosophy is no data is ever collected without explicit opt-in. Ever! We also feel a responsibility towards educating the public about what this technology can and cannot do. Facial coding technology can tell you the expression on your face (which a human in the room would have picked anyhow) but it will not tell you what your thoughts are.

Reading into Emotion

Sentiment analysis is based on being able to extract signals of positive and negative sentiment from text, said Aaron Chavez, Chief Scientist at AlchemyAPI, a sentiment analysis service. With more targeted analysis, the goal is not just to look at positive or negative, but to associate it with things in the text. This makes it possible to zero in on specific aspects of a service such as the food being cold, while the waiter was helpful.

This is a challenging problem, and in many cases, it can be subjective. People can come to different conclusions when reading the same text. The biggest challenge tends to be what is unspoken. People don’t always come out and say in the clearest terms how they feel about things, said Chavez. For example, if someone uses sarcasm they may mean the opposite of what they say. Understanding this requires using external information from other sources.

Chavez explained,

You are pulling in external knowledge of the world in order to recognize when what is stated does not line up with what was intended. This kind of analysis can be more specific with a greater understanding of a person. For example, if they have a political affiliation they might be expressing sentiment in what might otherwise be considered a factual statement.

There are different approaches to sentiment analysis. Rule-based approaches create complex rule chains for associating text with sentiment. For example, a special rule might recognize negations, such as when someone uses the word “not” in a sentence. The engine needs to be able to recognize that “not good” is associated with a negative sentiment, while “good” by itself reflects positive sentiment.
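The negation rule described above can be sketched in a few lines of Python. This is a toy illustration only, not the approach of any vendor mentioned here; the `LEXICON` and `NEGATIONS` word lists and the `score` function are hypothetical names chosen for the example.

```python
# Hypothetical toy lexicon; real rule-based engines use far larger rule sets.
LEXICON = {"good": 1, "helpful": 1, "great": 1, "bad": -1, "cold": -1}
NEGATIONS = {"not", "never", "no"}


def score(text: str) -> int:
    """Sum word polarities, flipping the word that directly follows a negation."""
    total = 0
    negate = False
    for raw in text.lower().split():
        word = raw.strip(".,!?;:")
        if word in NEGATIONS:
            negate = True  # remember the negation for the next word only
            continue
        polarity = LEXICON.get(word, 0)
        total += -polarity if negate else polarity
        negate = False  # the rule only reaches one word ahead
    return total


print(score("good"))      # 1
print(score("not good"))  # -1
```

Because the rule only looks one word ahead, a phrase such as “not very good” would slip past it, which illustrates why rule chains tend to grow complex.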

In contrast, deep learning systems use neural networks to learn representations of text that remain robust across the many ways the same idea can be phrased.

Chavez explained,

There are so many ways of saying the same thing. That is one of the strengths of deep learning, where you can come up with representations where words and phrases are similar. Some of the older systems that are rule-based are susceptible to not seeing the problem when you make a minor modification to what someone is saying. Deep learning has a robust understanding of language that is suitable to minor different ways of saying the same thing.

There can also be cultural differences, and these kinds of techniques can be more explicit when someone is talking in a slightly different context. Thin is good for smart phones and bad for bed sheets. Chavez noted,

The system needs to use what you know about them to color that sentiment. But you can only take advantage of this when you have access to that person’s history. There is a limit to what you can infer when the system does not know that person’s history. Even if there is access to this history, it can be a challenging problem to solve.

Aggregating this data can make it easier to find more information about what a person means, even though the technology is far from perfect. When you are collecting hundreds of weak signals, they start to coalesce. Any way you can aggregate data, whether it is based on time, location, or a speaker’s history will provide an opportunity for a more reliable signal.
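As a rough sketch of this kind of aggregation, the snippet below averages per-message sentiment scores within a group. The `SIGNALS` records, their field names, and the `aggregate` helper are all made up for illustration; they do not come from any product discussed here.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-message sentiment scores in the range [-1, 1].
SIGNALS = [
    {"location": "Austin", "score": 0.5},
    {"location": "Austin", "score": 1.0},
    {"location": "Austin", "score": -0.5},
    {"location": "Austin", "score": 1.0},
    {"location": "Boston", "score": -1.0},
    {"location": "Boston", "score": 0.5},
]


def aggregate(signals, key):
    """Group weak signals by the given field and average the scores per group."""
    groups = defaultdict(list)
    for signal in signals:
        groups[signal[key]].append(signal["score"])
    return {group: mean(scores) for group, scores in groups.items()}


print(aggregate(SIGNALS, "location"))  # {'Austin': 0.5, 'Boston': -0.25}
```

The same helper could group by a time bucket or a speaker identifier, provided each record carries that field.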

People are using sentiment analysis in a lot of different ways. It is commonly used to understand the voice of the customer where the company can analyze customer interactions and decide whether they are being done well. The technology is widely used for social media monitoring for tracking the progress of new products and deciding whether the latest ad campaign is having an impact on Facebook or Twitter. It is also being used for stock analysis and targeted advertising.

Meanwhile, Kanjoya is launching a consumer-grade SaaS sentiment analysis service that hooks up to any data source, instantly (and continuously) analyzes it, and automatically provides actionable insights beyond measurement, such as promoter/detractor discovery, baselining and anomaly detection, competitive analysis, and a real-time net promoter score (NPS) analogue that does not require the NPS survey.

Berjikly explained,

We have proprietary data models built over the last decade to help us model how language and emotion are related, including how that changes depending on the context and background of the speaker. We account for all of those in our technology model, enabling us to decipher emotion at greater than human accuracy, without any training by the end user. There are no special technology requirements, we’ve built our products to work immediately, with intuitive user interfaces, and agnostic to the input data.

Berjikly said that Kanjoya’s sentiment analysis technology is predominantly used to help companies get closer to their customers’ wants, needs, and thoughts. “Humans are inherently emotional decision makers, and companies that acknowledge this qualitative side to the equation, and make it a priority to not just understand it, but act to address it, have a major, often unassailable competitive advantage in the customer experience.”

Sentiment analysis technology is likely to get even better over time, noted Chavez. The notion of positive and negative sentiment is a coarse lens to view this information. “Being able to go beyond positive and negative to determine the correct time to take action is going to be more interesting,” he said.

Hearing Emotions

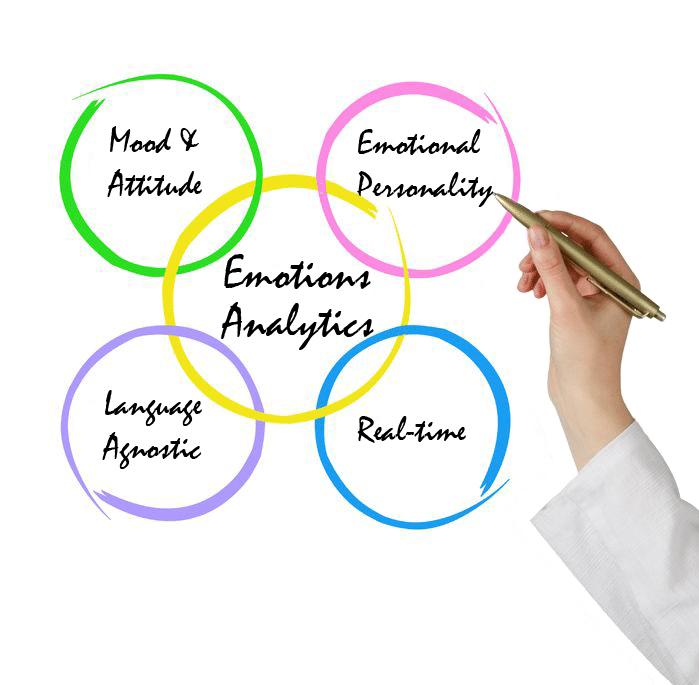

Speech emotional analytics technology works by analyzing our vocally transmitted emotions in real time as we speak.

Dan Emodi, VP Marketing and Strategic Accounts at Beyond Verbal, said this kind of technology can decipher three basic things using a microphone and a network connection via a cloud-based application:

- The speaker’s mood;

- The speaker’s attitude towards the subject he speaks about; and

- The speaker’s emotional decision making characteristics more commonly known as emotional personality.

A consumer version of the Beyond Verbal technology is available for the iPhone, Android, and Web browsers.

“Understanding emotions is adding what is probably the most important non existing interface today,” said Emodi.

Allowing machines to interact with us on an emotional level has almost unlimited commercial usage from market research, to call centers, to self-improving applications, wellness, media, content and down to Siri that finally understands your emotions. In implementing emotions into daily use it seems we are truly only bound by our own imagination.

Another tool for hearing emotions is EmoVoice, which is freely available as open source software. It uses a supervised machine learning approach that collects a huge amount of emotional voice data for which classifiers are trained and tested. Typically, data is recorded in separate sessions during which users are asked to show certain emotions or interact with a system that has been manipulated to induce the desired behavior. Afterward, the collected data is manually labeled by human annotators with the assumed user emotions. Classifiers that are able to assign certain emotional categories to voice data are computed from this data.

Prof. Dr. Elisabeth André at the University of Augsburg in Germany said techniques for detecting emotions may be employed to sort voice messages according to the emotions portrayed by the caller in call center applications. Among other things, a dialogue system may deploy knowledge on emotional user states to select appropriate conciliation strategies and to decide whether or not to transfer the caller to a human agent.

Methods for the recognition of emotions from speech have also been explored within the context of computer-enhanced learning, added André.

The motivation behind these approaches is the expectation that the learning process may be improved if a tutoring system adapts its pedagogical strategies to a student’s emotional state. Research has been conducted to explore the feasibility and potential of emotionally aware in-car systems. This work is motivated by empirical studies that provide evidence of the dependencies between a driver’s performance and his or her emotional state.

André’s team is also employing techniques for recognizing emotional state for social training within the EU-funded TARDIS project. In this project, young people engage in role play with virtual characters that serve as job interviewers in order to train how to regulate their emotions. This helps them learn how to cope with emotional states that arise in socially challenging situations, such as nervousness or anxiety. The first version used a desktop-based interface in TARDIS, while more recent work focuses on the use of augmented reality, as enabled by Google Glass, to give users recommendations on their social and emotional behaviors on the fly.

In the German-Greek CARE project, emotion recognition techniques are used to adapt lifestyle recommendations to the emotional state of elderly people.

One of the biggest challenges in teaching computers to recognize a variety of emotions in speech lies in working in natural environments. André said that promising results have been obtained for a limited set of basic emotions that are expressed in a prototypical manner, such as anger or happiness, but more subtle emotional states can be difficult to detect. Real-world environments also come with background noise, which affects recognition rates.

Another challenge is that people show great individualism in their emotional expression. André explained,

Many people don’t show emotions in a clear manner. Also in some social situations people don’t reveal their true emotions. For example, when talking to somebody with a high status, you would avoid showing negative emotions, such as anger.

Results comparable to human skills have been obtained for tracking a limited set of emotions when the speech is recorded beforehand and analyzed later, said André. Her team is working on improving the technology to analyze emotions in the wild using non-intrusive microphones and for running the software on mobile devices.

Seeing Emotions

Companies like Affectiva and Emotient are also starting to develop technology for quantifying emotional expression through video analysis of facial expressions. For example, Affectiva’s Affdex technology analyzes facial expressions to discern consumers’ emotions such as whether a person is engaged, amused, surprised or confused.

Affdex employs advanced computer vision and machine-learning algorithms within a scalable cloud-based infrastructure to identify the emotions portrayed in a face video. Affectiva has also developed SDKs for developing facial emotional analysis applications on both iPhone and Android devices.

Affdex uses standard webcams, like those embedded in laptops, tablets, and mobile phones, to capture facial videos of people as they view the desired content. Affectiva’s el Kaliouby said, “The prevalence of inexpensive webcams eliminates the need for specialized equipment. This makes Affdex ideally suited to capture face videos from anywhere in the world, in a wide variety of natural settings (e.g., living rooms, kitchens, office).”

First, a face is identified in the video and the main feature points on the face are located, such as eyes and mouth. Once the region of interest has been isolated, e.g., the mouth region, Affdex analyzes each pixel in the region to describe the color, texture, edges and gradients of the face, which is then mapped, using machine learning, to a facial expression of emotion, such as a smile or smirk.

Once classification is complete the emotion data extracted from a video is ready for summarization and aggregation, and is presented via the Affdex online dashboard. Expression information is also summarized for addition to a normative database.

Affectiva has amassed about two million facial videos from over 70 countries, which has allowed the company to build a global database of emotion response that can be sliced by geographic region and demographic, as well as by industry and product category. This allows companies to perform A/B tests for their content, and also get a better sense of where their ad falls with respect to other content in their vertical or market.

el Kaliouby said,

Today our technology can understand that a smile can have many different meanings – it could be a genuine smile, a smile of amusement, a smirk, a sarcastic smile, or a polite smile. This is where Affdex is at the moment – we’re training the machine that emotions come in many different nuances / flavors. Where we would like to take this in the future is for an emotion-sensing computer to pick up on more subtle cues like maybe a subtle lip purse or eye twitch – it will incorporate head gestures and shoulder shrug and physiological signals. It will know if a person is feeling nostalgic, or inspired.

Emotient’s software is designed to work as a web-based service with any video camera or camera-enabled device. This could be a webcam, camera embedded in a smart screen or digital sign, tablet, or smartphone. The software measures emotional responses via facial expression analysis. Emotient’s approach combines proprietary machine learning algorithms, a self-optimizing data collection engine, and state-of-the-art facial behavior analysis to detect 7 primary emotions, including joy, surprise, sadness, anger, disgust, contempt, and fear, as well as more advanced emotional states including confusion and frustration. The system detects all faces within the field of view of each frame and analyzes the facial expression.

Emotient’s co-founders have spent the past two decades innovating an automated emotion measurement technology based on facial expression analysis. Emotient’s team has published hundreds of papers on novel uses for the technology that include the development of autism intervention games that help subjects mimic facial expressions and identify facial expressions in others, measuring the difference between real and fake pain, and analyzing student engagement in an online education setting.

As a business, Emotient has chosen to focus its early commercial efforts on advertising, market research, and retail, and is delivering emotion analytics to customers as aggregate, anonymous data that is segmented by demographic.

Emotient’s Denman said early adopters of the software are using it to automate focus group testing and to conduct market research for product and user experience assessment.

In the past year we have been working with major retailers, brands and retail technology providers who are using Emotient to compile analytics on aggregate customer sentiment at point of sale, and in response to new advertising or promotions in-store or online.

Customer service patterns and trends can be identified, both for training purposes and troubleshooting in areas of the store where assistance is needed, or as a measure at point of sale or point of entry to determine customer satisfaction levels. The resulting analytics can be used in benchmarking the efficacy of a specific display, shelf promotion, advertisement, or overall customer experience. We believe the real value of the emotion analytics we collect and deliver to retailers and brands is in aggregate information segmented by target demographic, and less so by individuals.

Emotient is working with iMotions, whose Attention Tool platform adds other biometrics, including eye tracking, heart rate measurement, and galvanic skin response (GSR), for improved academic, market, and usability research. Denman said, “These other biometric signals can be valid and helpful but facial expression analysis provides unique context that isn’t possible to capture otherwise.”

New Mirrors for Emotional Reflection

New mirrors for looking inwards could also help to overcome the blinders to recognizing our inner state. Beyond Verbal’s Emodi said,

Understanding ourselves is something we are much less capable of doing. Many of us have limited capabilities at understanding how we come across and what we transmit to the other side. Emotions Analytics hold great potential in helping people get in tune with their own inner self – from tracking our happiness and emotional well-being to practicing our Valentine pitch, working on our assertiveness or leadership capabilities, and learning to be a more effective sales person.

Western culture makes it easy to push down and hide our emotions from each other, not to mention ourselves. Paul Ekman, Professor Emeritus of Psychology at UCSF, found that many people mute the full expression of their emotions, which then flash only briefly or manifest less intensely as what he calls micro-expressions and subtle expressions.

Personal experiments with older versions of Ekman’s training tools for identifying facial expressions made it easier to not only identify these emotions in other people, but to notice them with greater clarity in myself. Using these kinds of tools for emotional analysis promises to provide us with benchmarks for easily tracking our emotional state over time, and perhaps identifying behaviors and events that impact us in beneficial or adverse ways.

But this is not always an easy inquiry. As AlchemyAPI’s Chavez noted,

People have used this to look at emails to analyze their own emails for sentiment. What is funny is that people are more willing to shine the light on others and groups of people for marketing, but not as often do they put it on themselves to see if their emails have negative tones or their feet are going in the wrong direction.

George Lawton has been infinitely fascinated yet scared about the rise of cybernetic consciousness, which he has been covering for the last twenty years for publications like IEEE Computer, Wired, and many others. He keeps wondering if there is a way all this crazy technology can bring us closer together rather than eat us. Before that, he herded cattle in Australia, sailed a Chinese junk to Antarctica, and helped build Biosphere II. You can follow him on the Web and on Twitter @glawton.

